Give your ElevenLabs voice agent a face

ElevenLabs builds some of the best voice AI available. Their Conversational AI platform handles speech recognition, LLM reasoning, and voice synthesis in a single pipeline. Millions of developers use it.

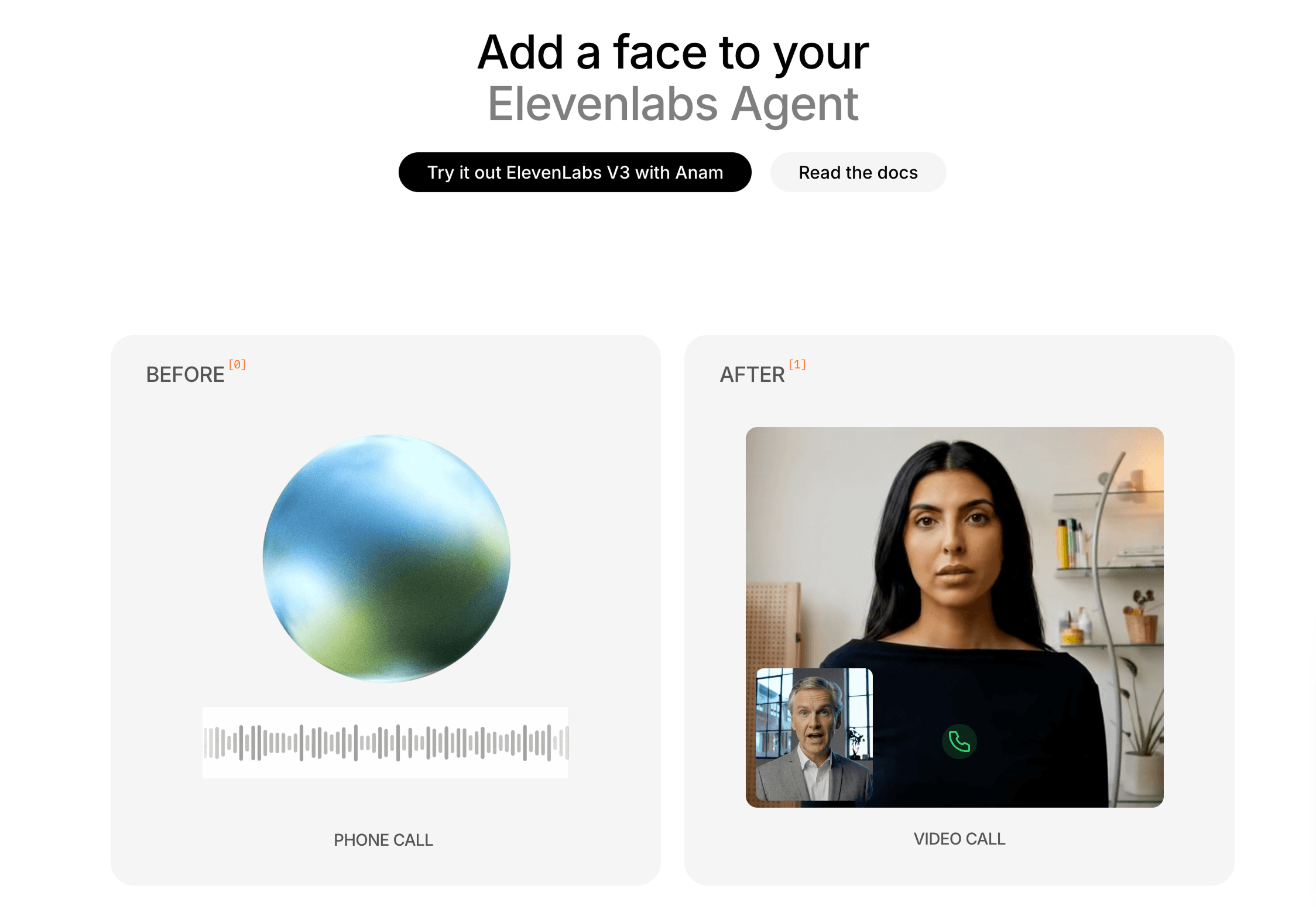

But when a user talks to one of these agents, there's nothing to look at. A waveform, maybe. A chat window. The voice is there. The face isn't.

That gap costs more than you'd think. 70% of users prefer an interactive avatar to a voice-only agent. People talk longer, complete more flows, and build trust faster when there's a face on the other side.

Anam now has a native integration with ElevenLabs. If you've already built a Conversational AI agent, you can add a real-time avatar without touching your existing setup.

How it works

ElevenLabs handles the voice. Anam handles the face. They connect through Anam's audio passthrough mode.

Your ElevenLabs agent's audio output streams into Anam's face generation pipeline. The Cara model takes that audio and generates a photorealistic face in real time: lip sync, head movement, eye gaze, and facial expressions, all derived from the audio signal. The avatar responds in under a second.

Nothing about your ElevenLabs agent changes. Your prompts, tools, knowledge bases, and conversation logic stay exactly as they are. You pass in your ElevenLabs agent ID and session token when starting an Anam session, and you get a face.
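
As a rough sketch of that last step, here's what assembling the session request might look like in Python. The class name, the field names, and the `audio_passthrough` mode string are placeholders made up for illustration, not Anam's actual API. The integration cookbook has the real request shape.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class PassthroughSessionRequest:
    """Illustrative payload for starting an avatar session.

    Every name here is hypothetical; the real request shape is
    defined by the integration cookbook.
    """

    agent_id: str       # ID of your existing ElevenLabs agent
    session_token: str  # session token for that agent

    def to_json(self) -> str:
        # Both values are needed, so fail early if either is missing.
        if not self.agent_id or not self.session_token:
            raise ValueError("agent_id and session_token are both required")
        # Hypothetical field names, chosen for illustration only.
        payload = {
            "mode": "audio_passthrough",
            "elevenlabs": {
                "agent_id": self.agent_id,
                "session_token": self.session_token,
            },
        }
        return json.dumps(payload, sort_keys=True)


# Placeholder values; substitute your own agent ID and session token.
request = PassthroughSessionRequest(agent_id="agent_123", session_token="token_abc")
body = request.to_json()
```

The point of the sketch is how little the avatar side needs to know: two identifiers from ElevenLabs, and nothing about your prompts, tools, or conversation logic.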

The full integration cookbook walks through setup step by step. It takes about 10 minutes.

What changes for your users

This isn't a cosmetic upgrade. The way people interact with an agent shifts when there's a face.

Users talk longer. They're more willing to restart when something goes wrong instead of abandoning the flow. They describe the experience differently. One company running A/B tests found that users called the avatar interaction the "quickest route to comfort" compared to text and voice-only alternatives.

Think about the use cases where ElevenLabs agents already work well, and consider what a face adds:

Customer support. A voice agent resolves the ticket. An interactive avatar resolves it while making the user feel heard instead of handled. That's the difference between a resolved ticket and a retained customer.

Sales and onboarding. Trust matters in the first interaction. A visible agent converts better than an invisible one. Users who can see who they're talking to are more likely to complete an onboarding flow or book a follow-up.

Training and coaching. Long sessions need sustained attention. A face holds it. Coaches and tutors who've added avatars report that users stay engaged through sessions that would otherwise see high drop-off.

Language learning. Lip movement is a teaching tool. Learners watching a mouth form words pick up pronunciation faster than those only hearing audio. An interactive avatar gives your tutoring agent a mouth worth watching.

Getting started

You need three things: an ElevenLabs Conversational AI agent, an Anam account, and a few lines of code.

The integration cookbook covers setup, code examples, and configuration options. You can test on Anam's free tier.

If you're already building agents with other frameworks, the same pattern applies. Anam's avatar API is agent-agnostic. We have similar integrations with Pipecat and VideoSDK, and the same approach works with anything else that produces audio output. The face layer is independent of the brain behind it.

ElevenLabs is the voice of AI. Anam is the face.

Frequently Asked Questions

What does the Anam + ElevenLabs integration actually do?

It adds a real-time, photorealistic avatar face to your existing ElevenLabs Conversational AI agent. Anam's Cara model generates lip sync, head movement, eye gaze, and expressions in sync with your agent's audio, while ElevenLabs continues handling speech, reasoning, and synthesis unchanged.

How much code do I need to change?

Minimal. Your existing ElevenLabs agent configuration stays the same. The integration uses Anam's audio passthrough mode and can be set up in around 10 minutes using Anam's integration cookbook.

Does the avatar add noticeable latency?

No. The avatar responds in under one second, so the face stays in sync without a perceptible delay in the conversation.

Is this only for ElevenLabs, or does it work with other frameworks?

It's not exclusive to ElevenLabs. Anam offers similar integrations with Pipecat, VideoSDK, and other audio-producing frameworks, positioning avatars as a framework-agnostic layer you can add to any voice agent stack.


© 2026 Anam Labs

HIPAA & SOC-II Certified