Changelog

Client-side context injection

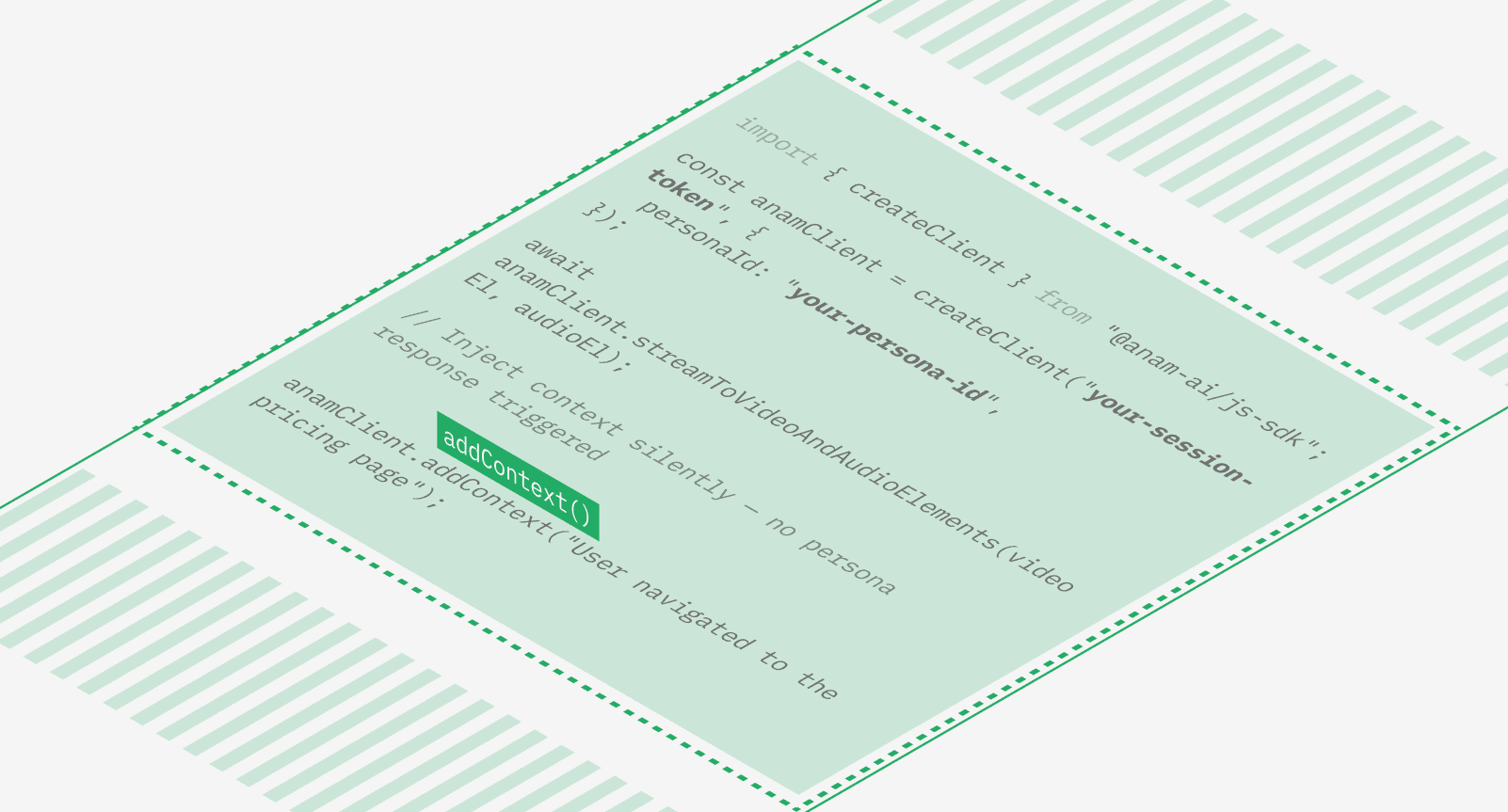

You can now inject context into a conversation without triggering a persona response. Call addContext() in the JavaScript SDK to silently append information (such as CRM data, page navigation events, or real-time application state) to the conversation history. The persona won’t respond immediately, but will have that context available the next time the user speaks.

This is useful for building context-aware agents that adapt to what the user is doing in your application without interrupting the conversation flow.
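
To make the behaviour concrete, here is a toy Python model of silent context injection. It is not the Anam SDK: the ToyConversation class and its method names are hypothetical, and it only illustrates that injected context triggers no response but is visible on the next user turn.

```python
from dataclasses import dataclass, field


@dataclass
class ToyConversation:
    """Toy stand-in for a conversation history; not the Anam SDK."""

    history: list[tuple[str, str]] = field(default_factory=list)
    responses: int = 0

    def add_context(self, text: str) -> None:
        # Context is appended silently: no persona response is triggered.
        self.history.append(("context", text))

    def user_says(self, text: str) -> list[str]:
        # A user turn triggers a response; return the injected context the
        # persona could draw on when answering it.
        self.history.append(("user", text))
        self.responses += 1
        return [entry for role, entry in self.history if role == "context"]


conversation = ToyConversation()
conversation.add_context("User opened the pricing page")
responses_after_injection = conversation.responses  # no response yet
visible_context = conversation.user_says("Which plan fits a small team?")
```

In a real integration the equivalent call would be addContext() in the JavaScript SDK; check the SDK reference for its exact signature.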

User speech detection events

Both the Python and JavaScript SDKs now emit userSpeechStarted and userSpeechEnded events the moment voice activity is detected, before any transcription is available. Use these to build responsive “listening” indicators and other UI feedback that reacts instantly when the user begins or stops speaking.
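
As a rough sketch of a “listening” indicator, the state logic can be as small as the following. The event names userSpeechStarted and userSpeechEnded come from this changelog; the ListeningIndicator class, the way events are dispatched to it, and the "transcript" event name are assumptions for illustration, not SDK code.

```python
class ListeningIndicator:
    """Toy UI state driven by speech detection events (not the SDK)."""

    def __init__(self) -> None:
        self.listening = False

    def handle(self, event_name: str) -> None:
        if event_name == "userSpeechStarted":
            self.listening = True
        elif event_name == "userSpeechEnded":
            self.listening = False
        # Any other event leaves the indicator unchanged.


indicator = ListeningIndicator()
states = []
for event in ["userSpeechStarted", "transcript", "userSpeechEnded"]:
    indicator.handle(event)
    states.append(indicator.listening)
```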

Lab changes

Improvements

Voice cloning for all paid plans: Custom voice cloning is now available to Explorer and Growth plans, previously limited to Professional and Enterprise.

Share and embed redesign: Share links and embed widgets have been consolidated into a simpler 1-to-1 model with a cleaner management interface.

Persona tools via API: The PUT persona endpoint now accepts a tool field, allowing you to attach tools to personas programmatically.

Fixes

Fixed one-shot avatar refinement timing out by making Gemini refinement non-fatal with a 35-second timeout.

Fixed knowledge upload endpoints not accepting Bearer API key authentication.

Fixed end-session race conditions with idempotent endpoint and atomic updates.

Persona changes

Improvements

Conversation context accuracy: A new message history system tracks which text was actually spoken versus interrupted, and records tool call arguments and results. The persona now maintains accurate context after interruptions, leading to more coherent multi-turn conversations.

Audio passthrough stability: Late-arriving audio in BYO TTS sessions no longer causes unintended interruptions. Audio is buffered and played back in order, improving reliability for Pipecat and other audio passthrough integrations.

Fixes

Fixed stale video frames occasionally appearing after a response completes.

API changes

Improvements

Context injection: New addContext() method lets you inject context into the conversation history without triggering a response (JS SDK v4.11.0).

Speech detection events: userSpeechStarted and userSpeechEnded events fire at the VAD level for instant speech detection (JS SDK v4.12.0).

Adaptive bitrate streaming and Zero Data Retention mode

Adaptive bitrate streaming

Anam now dynamically adjusts video quality based on network conditions. When bandwidth drops, the stream adapts in real time to maintain smooth, uninterrupted video rather than freezing or dropping frames. When conditions improve, quality scales back up automatically. This is a significant improvement for users on mobile networks, VPNs, or connections with variable bandwidth.
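
For intuition, adaptive bitrate selection is often described as picking the highest quality tier that fits the current bandwidth estimate. The sketch below shows that general idea only; the tier values, the headroom factor, and the function name are hypothetical and do not describe Anam's actual implementation.

```python
# Hypothetical bitrate ladder in kbit/s; the real tiers are not documented here.
LADDER_KBPS = [300, 800, 1500, 3000]
HEADROOM = 0.8  # assumed safety margin: use at most 80% of the estimate


def pick_bitrate(estimated_bandwidth_kbps: float) -> int:
    """Return the highest tier that fits within the bandwidth budget."""
    budget = estimated_bandwidth_kbps * HEADROOM
    fitting = [tier for tier in LADDER_KBPS if tier <= budget]
    # Fall back to the lowest tier rather than stopping the stream.
    return max(fitting) if fitting else LADDER_KBPS[0]


# Bandwidth drops, then recovers: quality steps down and back up.
estimates_kbps = [4000, 1200, 200, 2500]
chosen = [pick_bitrate(estimate) for estimate in estimates_kbps]
```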

Zero Data Retention mode

Enterprise customers can now enable Zero Data Retention on any persona. When enabled, no session data (recordings, transcripts, or conversation logs) is stored after a session ends. This applies across the full pipeline including voice and LLM data.

Toggle it on from persona settings in the Lab, or set it via the API. Learn more.

Lab changes

Improvements

System tools: Personas can now use built-in system tools. change_language switches speech recognition to a different language mid-conversation, and skip_turn pauses the persona from responding when the user needs a moment to think. Enable them from the Tools tab in Build.

Tool validation: Auto-deduplication of tool names with clearer validation error messages.

Share link management: Migrated share links to a 1-to-1 primary model with a simpler toggle interface.

Fixes

Fixed reasoning model responses getting stuck in “thinking…” state.

Fixed soft-deleted knowledge folders not restoring on document upload.

Fixed LiveKit session type classification for snake_case environment payloads.

Persona changes

Improvements

Agora AV1 support: Agora integration now supports the AV1 video codec for better compression and quality at lower bitrates.

Multi-agent LiveKit: Audio routing now works correctly in multi-agent LiveKit rooms with multiple Anam avatars.

Fixes

Fixed tool enum type validation.

New Integrations: ElevenLabs, Framer, VideoSDK, Pipecat

Four new ways to use Anam avatars in your stack:

Pipecat

The pipecat-anam package brings Anam avatars to Pipecat, the open-source framework for voice and multimodal AI agents. Run pip install pipecat-anam, add AnamVideoService to your pipeline, and you’re streaming. Use audio passthrough for full control over your own orchestration, or let Anam handle the pipeline end-to-end. GitHub repo.

ElevenLabs server-side agents

Put a face on any agent you’ve built in ElevenLabs. Pass in your ElevenLabs agent ID and session token when starting a session, and Anam handles the rest. No changes to your existing ElevenLabs setup are needed. Cookbook.

VideoSDK

Anam is now officially supported on VideoSDK, a WebRTC platform similar to LiveKit. The integration is built on top of the Python SDK.

Framer

The Anam Avatar plugin is now on the Framer Marketplace. Drop an avatar into any Framer site without writing code.

Metaxy: sample-level versioning for ML pipelines

We wrote up a deep dive on Metaxy, our open-source metadata versioning framework for multimodal data pipelines. It tracks partial data updates at the field level so teams only reprocess what actually changed. Works with orchestrators like Dagster, agnostic to compute (Ray, DuckDB, etc.). GitHub.

Lab changes

Improvements

• Build page redesign: Everything lives in Build now. Avatars, Voices, LLMs, Tools, and Knowledge are tabs within a single page. Create custom avatars, clone voices, add LLMs, and upload knowledge files without leaving the page. Knowledge is a file drop on the Prompt tab: upload a document and it’s automatically turned into a RAG tool.

• Knowledge base improvements: Non-blocking document deletion with pending state and rollback on error. PDF uploads restored. Stuck documents are auto-detected with retry from the UI.

Fixes

• Fixed typo in thinking duration display.

• Fixed sticky hover states on touch devices.

Persona changes

Improvements

• Smart voice matching: One-shot avatars now auto-select a voice matching the avatar’s detected gender.

• Mobile improvements: Tables replaced with cards and lists. Bottom tab bar instead of hamburger menu. Long-press context menus on persona tiles. Touch-friendly tooltips.

• Video stability: New TWCC-based frame-drop pacer with GCC congestion control. Smoother video on constrained or variable-bandwidth connections.

• Network connectivity: TURN over TLS for ICE, improving session establishment behind corporate firewalls and VPNs.

Fixes

• Fixed ElevenLabs pronunciation issues with certain text patterns.

• Fixed text sanitization causing incorrect punctuation in TTS output.

• Fixed silent responses not being detected correctly.

API changes

Improvements

Tool call event handlers: onToolCallStarted, onToolCallCompleted, and onToolCallFailed handlers for tracking tool execution on the client.

Documents accessed: ToolCallCompletedPayload now includes a documentsAccessed field for Knowledge Base tool calls.

Fixes

Fixed duplicate tool call completion events.

Introducing Anam Python SDK

Anam Python SDK

Anam now has a Python SDK. It handles WebRTC streaming, audio/video frame delivery, and session management.

What’s in the box:

Media handling — The SDK manages WebRTC connections and signalling. Connect, and you get synchronized audio and video frames back.

Multiple integration modes — Use the full pipeline (STT, LLM, TTS, Face) or bring your own TTS via audio passthrough.

Live transcriptions — User speech and persona responses stream in as partial transcripts, useful for captions or logging conversations.

Async-first — Built on Python’s async/await. Process media frames with async iterators or hook into events with decorators.

People are already building with it: rendering ASCII avatars in the terminal, processing frames with OpenCV, and piping audio to custom pipelines. Check the GitHub repo to get started.

Lab changes

Improvements

Visual refresh: Updated Lab UI with new brand styling, including new typography (Figtree), refreshed color tokens, and consistent component styles across all pages.

Persona changes

Improvements

ICE recovery grace period: WebRTC sessions now survive brief network disconnections instead of terminating immediately. The engine detects ICE connection drops and holds the session open, allowing the client to reconnect without losing conversation state.

Language configuration: You can now set a language code on your persona, ensuring the STT pipeline uses the correct language from session start.

Fixes

Voice generation options: Added configurable voice generation parameters for more control over TTS output.

ElevenLabs streaming: Removed input buffering for ElevenLabs TTS, reducing time-to-first-audio for all sessions using ElevenLabs voices.

Anam is now HIPAA compliant

A big milestone for our customers and partners. Anam now meets the standards required for HIPAA compliance, the U.S. regulation that protects sensitive health information. This means healthcare organizations and companies handling medical data can use Anam with confidence that their data is protected and processed securely.

What HIPAA compliance means.

HIPAA (Health Insurance Portability and Accountability Act) sets national standards for safeguarding medical information. Compliance confirms that Anam maintains strict administrative, physical, and technical safeguards, covering how data is stored, encrypted, accessed, and shared.

An independent assessment verified that Anam’s systems and policies meet the HIPAA Security Rule and Privacy Rule requirements.

Security is built into Anam.

Security has been a core principle since day one. Achieving HIPAA compliance reinforces our commitment to keeping your data private and secure while ensuring reliability and transparency for regulated industries.

Access our Trust Center.

You can review our security policies, data handling procedures, subprocessors, and compliance documentation, including our HIPAA attestation, at the Anam Trust Center.

Lab changes

Improvements

Enhanced voice selection

You can now search voices by your use case or conversational style! We also support 50+ languages that can now be previewed in the lab all at once.

Product tour update

We updated our product tour to help you find the right features and the right plan for you.

Streamlined One-Shot avatar creation.

Redesigned one-shot flow with clearer step progression and enhanced mobile responsiveness.

Naming personas is now automatic.

New personas are now named automatically based on the selected avatar.

Session start time.

Session startup is expected to be 1.1 seconds faster for each session.

Fixes

Share links.

Fixed share-link sessions taking extra concurrency slots.

Persona changes

Improvements

Improved TTS pronunciation.

Improved TTS pronunciation for all languages by adapting our input text chunking.

Traceability and monitoring of session IDs.

Session IDs are now sent through all LLM calls to improve traceability and monitoring.

Increased audio sampling rate.

The internal audio sampling rate has increased from 16 kHz to 24 kHz, improving audio quality for Anam Personas.

WebSocket size increase.

Increased the maximum WebSocket size for larger talk stream chunks (from 1 MB to 16 MB).

Fixes

Concurrency calculation fix.

Fixed concurrency calculation to only consider sessions from the last 2 hours.

Less freezing for slower LLMs

Slower LLMs will now result in less freezing, but shorter "chunks" of speech.
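
To illustrate the concurrency calculation fix above, here is a minimal sketch of counting concurrent sessions with a 2-hour window. The session record shape and the rule that only still-open sessions count are assumptions; only the 2-hour window comes from this changelog.

```python
from datetime import datetime, timedelta, timezone

WINDOW = timedelta(hours=2)  # window stated in the changelog


def count_concurrent(sessions: list[dict], now: datetime) -> int:
    """Count open sessions that started within the last 2 hours.

    Each session is a dict with 'started_at' (datetime) and
    'ended_at' (datetime or None). This shape is hypothetical.
    """
    cutoff = now - WINDOW
    return sum(
        1
        for session in sessions
        if session["ended_at"] is None and session["started_at"] >= cutoff
    )


now = datetime(2025, 1, 1, 12, 0, tzinfo=timezone.utc)
sessions = [
    {"started_at": now - timedelta(minutes=30), "ended_at": None},  # live
    {"started_at": now - timedelta(hours=4), "ended_at": None},     # stale
    {"started_at": now - timedelta(hours=1), "ended_at": now - timedelta(minutes=50)},
]
concurrent = count_concurrent(sessions, now)
```

With a window like this, a session that was never closed cleanly stops occupying a concurrency slot once it falls outside the window.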

Session recordings available through API

By default, every session is now recorded and saved for 30 days. Watch back any conversation in the Lab (lab.anam.ai/sessions) to see exactly how users interact with your personas, including the full video stream and conversation flow.

Recordings and transcripts are also available via API. Use GET /v1/sessions/{id}/transcript to fetch the full conversation programmatically for analytics, QA, or archival. See here: https://docs.anam.ai/api-reference/get-session-recording

For privacy-sensitive applications, you can disable recording in your persona config.
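
A minimal sketch of building the transcript request with Python's standard library follows. The endpoint path is the one given above; the base URL and the Bearer header are assumptions, so confirm both against the API reference. The request is only constructed here, not sent.

```python
from urllib.parse import quote
from urllib.request import Request

API_BASE = "https://api.anam.ai"  # assumed base URL; check the API reference


def build_transcript_request(session_id: str, api_key: str) -> Request:
    """Build (but do not send) a GET request for a session transcript."""
    url = f"{API_BASE}/v1/sessions/{quote(session_id, safe='')}/transcript"
    # Bearer authentication is an assumption for this endpoint.
    headers = {"Authorization": f"Bearer {api_key}"}
    return Request(url, headers=headers, method="GET")


request = build_transcript_request("SESSION_ID", "YOUR_API_KEY")
```

Passing the result to urllib.request.urlopen would send it; the response format is described in the linked API reference.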

Two-pass avatar refinement

One-shot avatar creation now refines images in two passes. Upload an image, and the system generates an initial avatar, then refines it for better likeness and expression. Available to all users.

Lab changes

Improvements

Added speechEnhancementLevel (0-1) to voiceDetectionOptions for control over how aggressively background noise is filtered from user audio

Support for ephemeral tool IDs, so you can configure tools dynamically per session

Added delete account and organization buttons

Fixes

Fixed terminology on tools tab

Fixed RAG default parameters not being passed

Fixed custom LLM default settings

Persona changes

Improvements

Support for Gemini thinking/reasoning models

The speechEnhancementLevel parameter now passes through via voiceDetectionOptions

Engine optimizations for lower latency under load

Fixes

Fixed GPT-5 tool calls returning errors

Fixed audio frame padding that could cause playback issues

Fixed repeated silence messages

Fixed silence breaker not responding to typed messages

AI-Coustics and Reasoning Model Support

User Speech Enhancement

We’ve integrated ai-coustics as a preprocessing layer in our user audio pipeline. It enhances audio quality before it reaches speech detection, cleaning up background noise and improving signal clarity in real-world conditions. This reduces false transcriptions from ambient sounds and improves endpointing accuracy, especially in noisy environments like cafes, offices, or outdoor settings.

Configurable Persona Responsiveness

Control how quickly your persona responds with voiceDetectionOptions in the persona config:

endOfSpeechSensitivity (0-1): How eager the persona is to jump in. 0 waits until it’s confident you’re done talking, 1 responds sooner.

silenceBeforeSkipTurnSeconds: How long before the persona prompts a quiet user.

silenceBeforeSessionEndSeconds: How long a silence lasts before the session ends.

silenceBeforeAutoEndTurnSeconds: How long a mid-sentence pause waits before the persona responds.
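
The options above could be assembled as shown in this sketch. The field names come from this changelog; the values are illustrative only, and the validation rules (in particular that the silence durations must be positive) are assumptions rather than documented constraints.

```python
# Illustrative values only; tune them for your own use case.
voice_detection_options = {
    "endOfSpeechSensitivity": 0.5,         # 0 waits longer, 1 responds sooner
    "silenceBeforeSkipTurnSeconds": 5,
    "silenceBeforeSessionEndSeconds": 60,
    "silenceBeforeAutoEndTurnSeconds": 2,
}


def validate(options: dict) -> list[str]:
    """Return a list of problems found in the options (empty if none)."""
    errors = []
    sensitivity = options.get("endOfSpeechSensitivity")
    if sensitivity is not None and not 0 <= sensitivity <= 1:
        errors.append("endOfSpeechSensitivity must be between 0 and 1")
    for key, value in options.items():
        # Assumed rule: silence durations should be positive numbers.
        if key.startswith("silenceBefore") and value <= 0:
            errors.append(f"{key} must be positive")
    return errors


persona_config = {"voiceDetectionOptions": voice_detection_options}
problems = validate(persona_config["voiceDetectionOptions"])
```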

Reasoning Model Support

Added support for OpenAI reasoning models and custom Groq LLMs. Reasoning models can think through complex scenarios before responding, while Groq’s high-throughput infrastructure makes these typically-slower models respond with conversational latencies suitable for real-time interactions. Add your reasoning model in the lab: https://lab.anam.ai/llms.

Persona changes

Fixes

Fixed Knowledge Base (RAG) tool calling with proper default query parameters

Fixed panic crashes when sessions error during startup

Lab changes

Fixes

Fixed Powered by Anam text visibility when watermark removal is enabled

Updated API responses for GET/UPDATE persona endpoints

API changes

Improvements

Introduced agent audio input streaming for BYO audio workflows, allowing you to integrate with arbitrary voice agents, e.g. ElevenLabs agents. See docs on how to integrate.

Added WebRTC reasoning event handlers for reasoning model support

Introducing Cara 3: our most expressive model yet

The culmination of over 6 months of research, Cara 3 is now available. This new model delivers significantly more expressive avatars featuring realistic eye movement, more dynamic head motion, smoother transitions in and out of idling, and improved lip sync.

You can opt in to the new model in your persona config using avatarModel: 'cara-3' or by selecting it in the Lab UI. Note that all new custom avatars will use Cara 3 exclusively, while existing personas will continue to use the Cara 2 model by default unless explicitly updated.

SOC-2 Type II compliance

Anam has achieved SOC-2 Type II compliance. This milestone validates that our security, availability, and data protection controls have been independently audited and proven over time.

For customers building across learning, enablement, or live production use cases, this provides formal assurance regarding how we handle security, access, and reliability.

Visit the Trust Center

Integrations

Model Context Protocol (MCP) server

Manage your personas and avatars directly within Claude Desktop, Cursor, and other MCP-compatible clients. Use your favorite LLM-assisted tools to interact with the Anam API.

Anam x ElevenLabs agents

Turn any ElevenLabs conversational AI agent into a visual avatar using Anam’s audio passthrough.

Watch the

Lab changes

Improvements

UI overhaul. A redesigned Homepage and Build page make persona creation more intuitive. You can now preview voices/avatars without starting a chat and create custom assets directly within the Build flow. Sidebar and Pricing pages have also been refreshed.

Performance. Implemented TanStack caching to significantly improve Lab responsiveness.

Fixes

Fixed client tool events not appearing in the Build page chat.

Resolved an issue where tool calls and RAG were not passing parameters correctly.

Persona changes

Improvements

More Voices. Added ~100 new Cartesia voices (Sonic-3) and ~180 new ElevenLabs voices (Flash v2.5), covering languages and accents from all over the world.

New default LLM. Kimi-k2-instruct-0905 is now available. This SOTA open-source model offers high intelligence and excellent conversational abilities. (Note: Standard kimi-k2 remains recommended for heavy tool-use scenarios).

Configurable greetings. Added skip_greeting parameter, allowing you to configure whether the persona initiates the conversation or waits for the user.

Latency Reductions. STT optimization: We are now self-hosting Deepgram for Speech-to-Text, resulting in a ~30ms (p50) and ~170ms (p90) latency improvement. Frame buffering: Optimized output frame buffer, shaving off an additional ~40ms of latency per response.

Fixes

Corrected header handling to ensure reliable data center failover.

Fixed a visual artifact where Cara 3 video frames occasionally displayed random noise.

API changes

Improvements

API gateway guide. Added documentation and an example repository for routing Anam SDK traffic through your own API Gateway server. View on GitHub.

Introducing Anam Agents

Build and deploy AI agents in Anam that can engage alongside you.

With Anam Agents, your Personas can now interact with your applications, access your knowledge, and trigger workflows directly through natural conversation. This marks Anam’s evolution from conversational Personas to agentic Personas that think, decide, and execute.

Knowledge Tools

Give your Personas access to your company’s knowledge. Upload documents to the Lab, and they’ll use semantic retrieval to find and integrate the right information into responses, from product docs to internal manuals. Docs for Knowledge Base

Client Tools

Personas can now control your interface in real time. They can open checkout pages, display modals, navigate to specific sections, or update UI states, creating guided, voice-driven experiences that feel effortless for users. Docs for Client Tools

Webhook Tools

Connect your Personas to external APIs and services. They can check order status, create support tickets, update CRM records, or fetch live data from your systems. Configure endpoints, headers, and response types directly in the Lab. Docs for Webhook Tools

Intelligent Tool Selection

Each Persona’s LLM determines when to call a tool based on user intent, not scripts. If a user asks for an order update, the Persona knows to fetch data. If they request a demo, it books one instantly.

You can create and manage tools on the new Tools page in the Lab and attach them to any Persona from the Build page.

Anam Agents are available today in beta for all Anam users: https://lab.anam.ai/login

Lab changes

Improvements

Cartesia Sonic-3 voices: the most expressive TTS model.

Voice modal with expanded options, support for 50+ languages, voice samples. Added Cartesia TTS provider as the default.

Session reports now work for custom LLMs

Fixes

Prevented auto-logout when switching contexts.

Fixed race conditions in cookie handling

Resolved legacy session token issues

Removed issue-prone voices

Player and streaming: corrected aspect ratios for mobile devices.

Persona changes

Improvements

Deepgram Flux support for turn-taking (still using Whisper for transcription)

Deepgram Flux: https://deepgram.com/learn/introducing-flux-conversational-speech-recognition

Server-side optimisation to reduce GIL-contention and reduce latency

Server-side optimisation to reduce connection time

Fixes

Bug-fix to stop dangling LiveKit connections

Research

Improvements

Our first open-source library!

Metaxy is a metadata layer for multi-modal Data and ML pipelines that tracks feature versions, dependencies, and data lineage across complex computation graphs.

https://anam-org.github.io/metaxy/main/#3-run-user-defined-computation-over-the-metadata-increment

Session Analytics

Once a conversation ends, how do you review what happened? To help you understand and improve your Persona's performance, we're launching Session Analytics in the Lab. Now you can access a detailed report for every conversation, complete with a full transcript, performance metrics, and AI-powered analysis.

Full Conversation Transcripts. Review every turn of a conversation with a complete, time-stamped transcript. See what the user said and how your Persona responded, making it easy to diagnose issues and identify successful interaction patterns.

Detailed Analytics & Timeline. Alongside the transcript, a new Analytics tab provides key metrics grouped into "Transcript Metrics" (word count, turns) and "Processing Metrics" (e.g., LLM latency). A visual timeline charts the entire conversation, showing who spoke when and highlighting any technical warnings.

AI-Powered Insights. For a deeper analysis, you can generate an AI-powered summary and review key insights. This feature, currently powered by gpt-5-mini, evaluates the conversation for highlights, adherence to the system prompt, and user interruption rates.

You can find your session history on the Sessions page in the Lab. Click on any past session to explore the new analytics report. This is available today for all session types, except for LiveKit sessions. For privacy-sensitive applications, session logging can be disabled via the SDK.

Lab changes

Improvements

Improved Voice Discovery.

The Voices page has been updated to be more searchable, allowing you to preview voices with a single click, and view new details like gender, TTS-model and language.

Fixes

Fixed share-link session bug.

Fixed a bug where share-link sessions took an extra concurrency slot.

Persona changes

Improvements

Small improvement to connection time

Tweaks to how we perform WebRTC signalling allow for slightly faster connection times (~900ms faster for p95 connection time).

Improvement to output audio quality for poor connections

Enabled Opus in-band FEC to improve audio quality under packet loss.

Small reduction in network latency

Optimisations have been made to our outbound media streams to reduce A/V jitter (and hence jitter buffer delay). Expected latency improvement is modest (<50ms).

Fixes

Fix for LiveKit sessions with slow TTS audio.

Stabilizes LiveKit streaming by pacing output and duplicating frames during slowdowns to prevent underflow.
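
The idea of duplicating frames during slowdowns can be sketched as follows. This is a generic illustration under the assumption of one output slot per tick; the function name and data shapes are hypothetical and it is not Anam's engine code.

```python
from typing import Optional


def pace_frames(arrivals: list[Optional[str]]) -> list[str]:
    """Emit one frame per output tick, repeating the last frame on underflow.

    `arrivals` holds the frame that became ready at each tick, or None when
    the upstream frame was late.
    """
    output: list[str] = []
    last_frame: Optional[str] = None
    for frame in arrivals:
        if frame is not None:
            last_frame = frame
        if last_frame is not None:
            # Either the fresh frame or a duplicate of the previous one.
            output.append(last_frame)
    return output


# Two late ticks in the middle are filled with copies of "f2".
paced = pace_frames(["f1", "f2", None, None, "f3"])
```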

Intelligent LLM Routing for Faster Responses

The performance of LLM endpoints can be highly variable, with time-to-first-token latencies sometimes fluctuating by as much as 500ms from one day to the next depending on regional load. To solve this and ensure your personas respond as quickly and reliably as possible, we've rolled out a new intelligent routing system for LLM requests. This is active for both our turnkey customers and for customers using their own server-side Custom LLMs if they deploy multiple endpoints.

This new system constantly monitors the health and performance of all configured LLM endpoints by sending lightweight probes at regular intervals. Using a time-aware moving average, it builds a real-time picture of network latency and processing speed for each endpoint. When a request is made, the system uses this data to calculate the optimal route, automatically shedding load from any overloaded or slow endpoints within a region.
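
One common way to build a time-aware moving average is an exponentially weighted average whose weight depends on the time elapsed since the previous probe. The sketch below takes that approach as an assumption; the class name, the 30-second time constant, the endpoint names, and the "lowest average wins" rule are illustrative and not a description of Anam's router.

```python
import math
from typing import Optional


class EndpointStats:
    """Time-decayed moving average of probe latency for one endpoint."""

    def __init__(self, tau_seconds: float = 30.0) -> None:
        self.tau = tau_seconds  # assumed time constant
        self.average_ms: Optional[float] = None
        self.last_probe_at: Optional[float] = None

    def record(self, latency_ms: float, at: float) -> None:
        if self.average_ms is None:
            self.average_ms = latency_ms
        else:
            elapsed = at - self.last_probe_at
            # The longer since the last probe, the more the new sample counts.
            weight = 1.0 - math.exp(-elapsed / self.tau)
            self.average_ms += weight * (latency_ms - self.average_ms)
        self.last_probe_at = at


def pick_endpoint(stats: dict[str, EndpointStats]) -> str:
    """Route to the probed endpoint with the lowest average latency."""
    probed = {name: s.average_ms for name, s in stats.items() if s.average_ms is not None}
    return min(probed, key=probed.get)


stats = {"endpoint-a": EndpointStats(), "endpoint-b": EndpointStats()}
stats["endpoint-a"].record(200, at=0)
stats["endpoint-b"].record(150, at=0)
stats["endpoint-a"].record(210, at=10)
stats["endpoint-b"].record(900, at=10)  # endpoint-b becomes overloaded
chosen = pick_endpoint(stats)
```

Here endpoint-b started out faster, but a single slow probe raises its average enough that new requests shift to endpoint-a.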

Lab changes

Improvements

Generate one-shot avatars from text prompts

You can now generate one-shot avatars from text prompts within the lab, powered by Gemini’s new Nano Banana model. The one-shot creation flow has been redesigned for speed and ease-of-use, and is now available to all plans. Image upload and webcam avatars remain exclusive to Pro and Enterprise.

Improved management of published embed widgets

Published embed widgets can now be configured and monitored from the lab at https://lab.anam.ai/personas/published.

Persona changes

Improvements

Automatic failover to backup data centres

To ensure maximum uptime and reliability for our personas, we’ve implemented automatic failover to backup data centres.

Fixes

Prevent session crash on long user speech

Previously, unbroken user speech exceeding 30 seconds would trigger a transcription error and crash the session. We now automatically truncate continuous speech to 30 seconds, preventing sessions from failing in these rare cases.

Allow configurable session lengths of up to 2 hours for Enterprise plans

We had a bug where sessions had a max timeout of 30 minutes instead of 2 hours for Enterprise plans. This has now been fixed.

Resolved slow connection times caused by incorrect database region selection

An undocumented issue with our database provider led to incorrect region selection for our databases. Simply refreshing our credentials resolved the problem, resulting in a ~1s improvement in median connection times and ~3s faster p95 times. While our provider works on a permanent fix, we’re actively monitoring for any recurrence.
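
Returning to the long-speech fix above, truncating continuous speech to 30 seconds can be pictured as capping an audio buffer. In this sketch the 30-second limit comes from the changelog, while the sample rate, the buffer representation, and the choice to keep the first 30 seconds are assumptions.

```python
MAX_SPEECH_SECONDS = 30   # limit stated in the changelog
SAMPLE_RATE_HZ = 16_000   # assumed sample rate, for illustration only


def truncate_speech(samples: list[int], sample_rate_hz: int = SAMPLE_RATE_HZ) -> list[int]:
    """Keep at most 30 seconds of audio (here: the first 30 seconds)."""
    max_samples = MAX_SPEECH_SECONDS * sample_rate_hz
    return samples[:max_samples]


# A tiny sample rate keeps the example small: 45 s of audio at 10 Hz.
long_speech = list(range(450))
truncated = truncate_speech(long_speech, sample_rate_hz=10)
short_speech = truncate_speech([1, 2, 3], sample_rate_hz=10)
```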

Embed Widget

Embed personas directly into your website with our new widget. On the Lab's Build page, click Publish, then generate your unique HTML snippet. This snippet works in most common website builders, e.g. WordPress.org or Squarespace.

For added security we recommend adding a whitelist with your domain URL. This will lock down the persona to only work on your website. You can also cap the number of sessions or give the widget an expiration period.
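
Conceptually, a domain whitelist check compares the hostname of the page hosting the widget against an approved list. The sketch below shows an exact-match version; the function name is hypothetical, and whether the real widget matches subdomains or full origins is not stated here, so treat the matching rule as an assumption.

```python
from urllib.parse import urlparse


def is_allowed_origin(page_url: str, allowed_domains: set[str]) -> bool:
    """Return True when the page's hostname is on the allowlist."""
    hostname = urlparse(page_url).hostname  # lowercased, or None if missing
    return hostname is not None and hostname in allowed_domains


allowed = {"example.com", "www.example.com"}
on_site = is_allowed_origin("https://www.example.com/pricing", allowed)
other_site = is_allowed_origin("https://example.org/", allowed)
lookalike = is_allowed_origin("https://example.com.evil.io/", allowed)
```

Exact hostname matching rejects lookalike domains that merely start with an allowed name.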

API Changes

Improvements

One-shot avatars available via API

Professional and Enterprise accounts can now create one-shot avatars via API. Docs here.

Lab changes

Improvements

Spend caps

It's now possible to set a spend cap on your account. Available in profile settings.

Persona changes

Fixes

Prevent Cartesia from timing out when using slow custom LLMs.

We’ve added a safeguard to prevent Cartesia contexts from unexpectedly closing during pauses in text streaming. With slower LLMs, or if there’s a break or slow-down in text being sent, your connection will now stay alive, ensuring smoother, uninterrupted interactions.

© 2026 Anam Labs

HIPAA & SOC-II Certified